AI video generation is changing how businesses create content. From marketing teams to educators, modern AI video platforms make it possible to turn plain text into professional videos using digital avatars.

But as these platforms scale globally, a critical question emerges:

What happens when growth outpaces control?

Because without the right safeguards, innovation can quickly turn into risk.

⚠️ When Growth Exposes the Cracks

As adoption increases, AI video platforms begin to face challenges that are not obvious in the early stages. Rapid growth brings several issues to the surface:

🎂 Unverified Users Entering the Platform

Without a robust age-verification system, there was no reliable way to confirm that users met legal age requirements, which created compliance risks across regions.

🚫 Misuse for Explicit and Harmful Content

Even with usage guidelines, some users attempted to generate:

- Vulgar or adult content

- Offensive narratives

- Inappropriate video scripts using AI avatars

🎭 Digital Avatar Misuse

One of the most critical concerns was the possibility of users creating avatars resembling:

- Celebrities

- Politicians

- Public figures

This opened the door to deepfake misuse, defamation, and legal complications.

🤖 Repeat Offenders and Bot Activity

Users who violated policies often returned by:

- Creating multiple accounts

- Exploiting system loopholes

- Automating content generation

🔓 Security Gaps

Without strong protection layers, vulnerabilities could be exploited to:

- Bypass moderation systems

- Manipulate content workflows

- Access sensitive processes

🚨 Why These Challenges Become Dangerous at Scale

At a small scale, these issues may seem manageable. But as the platform grows, they multiply rapidly.

Unchecked risks can lead to:

- Legal exposure due to non-compliance

- Brand damage from harmful content

- Loss of enterprise trust

- Operational overload from manual moderation

In short, AI platforms without guardrails don’t just grow—they become unstable.

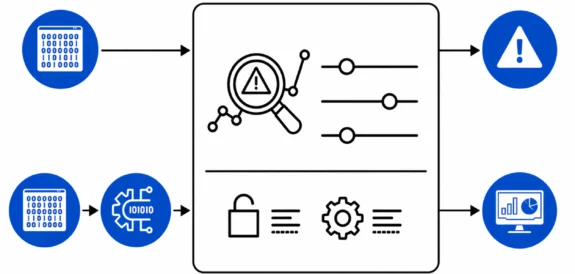

🛠️ The Shift Toward Controlled and Responsible AI

To address these challenges, a structured approach to AI safety became essential.

Instead of reacting to problems, the focus shifted to preventing them at the system level.

This meant redesigning the platform around five key pillars:

- User verification

- Content moderation

- Avatar protection

- Behavior monitoring

- Infrastructure security

🛡️ Building a Multi-Layered Safety System

A combination of AI models and security mechanisms helped bring control back to the platform:

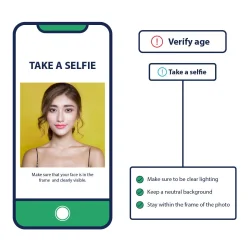

🆔 Intelligent Age Verification

A mix of facial analysis and document verification ensured users met legal age requirements.

For regions where strict KYC wasn’t feasible, privacy-friendly selfie-based estimation was introduced.

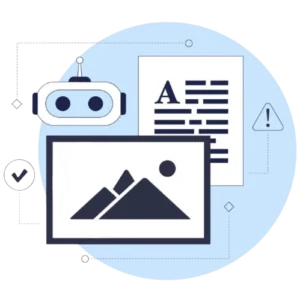
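
The two verification paths described above can be sketched as a small decision function. This is only an illustration under stated assumptions: the 18-year threshold, the 3-year safety margin on selfie estimates, and the names `AgeSignal`, `age_on`, and `decide_age_gate` are hypothetical, and the age estimator itself is not shown.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

MIN_AGE = 18            # assumed legal threshold; the real value varies by region
ESTIMATE_MARGIN = 3.0   # assumed buffer (years) to absorb selfie-estimation error


@dataclass
class AgeSignal:
    """Evidence collected for one user; either field may be missing."""
    document_birth_date: Optional[date] = None    # from a verified ID document
    selfie_estimated_age: Optional[float] = None  # from a hypothetical estimator


def age_on(birth: date, today: date) -> int:
    """Whole years elapsed between birth and today."""
    had_birthday = (today.month, today.day) >= (birth.month, birth.day)
    return today.year - birth.year - (0 if had_birthday else 1)


def decide_age_gate(signal: AgeSignal, today: date) -> str:
    """Return 'allow', 'deny', or 'needs_document'."""
    # A verified document is treated as authoritative when present.
    if signal.document_birth_date is not None:
        age = age_on(signal.document_birth_date, today)
        return "allow" if age >= MIN_AGE else "deny"
    # A selfie estimate only passes when it clears the threshold plus margin.
    if signal.selfie_estimated_age is not None:
        if signal.selfie_estimated_age >= MIN_AGE + ESTIMATE_MARGIN:
            return "allow"
    # Borderline or missing evidence escalates to document verification.
    return "needs_document"
```

In this sketch a borderline selfie estimate never denies a user outright. It escalates to the document path, which keeps the lightweight check privacy-friendly for clear cases while reserving stricter checks for uncertain ones.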

🧹 AI-Powered Content Moderation

Custom NLP models analyzed scripts before video generation, detecting:

- Explicit content

- Harmful narratives

- Policy violations

This prevented misuse at the input stage itself.
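
A pre-generation gate of this kind might look like the sketch below. The category names, the thresholds, and the functions `moderate_script` and `generate_video` are assumptions made for illustration. The `keyword_classifier` is a deliberately naive placeholder standing in for a trained NLP model, not a description of the custom models mentioned above.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A classifier maps a script to per-category scores in [0, 1].
Classifier = Callable[[str], Dict[str, float]]

# Assumed per-category blocking thresholds.
THRESHOLDS = {"explicit": 0.5, "harmful": 0.5, "policy": 0.5}


@dataclass
class ModerationResult:
    allowed: bool
    violations: List[str]


def keyword_classifier(script: str) -> Dict[str, float]:
    """Placeholder for a trained model: scores 1.0 on any keyword hit."""
    lexicon = {
        "explicit": {"nsfw", "explicit"},
        "harmful": {"threat", "attack"},
        "policy": {"impersonate"},
    }
    words = set(script.lower().split())
    return {cat: (1.0 if words & terms else 0.0) for cat, terms in lexicon.items()}


def moderate_script(script: str, classify: Classifier = keyword_classifier) -> ModerationResult:
    """Score the script and list every category at or above its threshold."""
    scores = classify(script)
    violations = sorted(c for c, s in scores.items() if s >= THRESHOLDS.get(c, 1.0))
    return ModerationResult(allowed=not violations, violations=violations)


def generate_video(script: str) -> str:
    """Run moderation first; rendering is only reached for clean scripts."""
    result = moderate_script(script)
    if not result.allowed:
        raise ValueError(f"script rejected: {', '.join(result.violations)}")
    return f"queued render for {len(script)} characters"
```

Because the check runs inside `generate_video` before any rendering work is queued, a rejected script never reaches the avatar pipeline, which is the point of filtering at the input stage.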

🧬 Avatar Similarity Detection

Facial recognition systems compared generated avatars with a global database of public figures.

Any avatar with high resemblance was automatically flagged, reducing the risk of:

- Deepfakes

- Defamation

- Identity misuse
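
One common way to implement a resemblance check is to compare face embeddings with cosine similarity and flag anything above a cutoff. The sketch below assumes that approach. The embedding vectors, the 0.85 threshold, and the names `closest_public_figure` and `should_flag` are illustrative, and the model that produces the embeddings is out of scope.

```python
import math
from typing import Dict, Optional, Sequence, Tuple

SIMILARITY_THRESHOLD = 0.85  # assumed cutoff; a real system would tune this


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two embedding vectors."""
    if len(a) != len(b):
        raise ValueError("embeddings must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # a zero vector carries no direction to compare
    return dot / (norm_a * norm_b)


def closest_public_figure(
    avatar: Sequence[float], gallery: Dict[str, Sequence[float]]
) -> Optional[Tuple[str, float]]:
    """Return the best-matching gallery entry and its score, if any."""
    best: Optional[Tuple[str, float]] = None
    for name, reference in gallery.items():
        score = cosine_similarity(avatar, reference)
        if best is None or score > best[1]:
            best = (name, score)
    return best


def should_flag(avatar: Sequence[float], gallery: Dict[str, Sequence[float]]) -> bool:
    """Flag the avatar when its closest match meets the threshold."""
    match = closest_public_figure(avatar, gallery)
    return match is not None and match[1] >= SIMILARITY_THRESHOLD
```

A linear scan like this is only practical for small galleries. A large reference database would typically use an approximate nearest-neighbor index instead, with the same thresholding applied to the top match.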

📊 Behavior Monitoring and Abuse Prevention

An AI-driven monitoring system tracked user behavior patterns.

It helped identify:

- Multiple account creation

- Rapid content generation

- Repeated policy violations

Suspicious users were automatically flagged, blocked, or moved into re-verification flows.
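
The flag, block, and re-verify outcomes can be illustrated with a simple rule-based monitor. This is a sketch under assumptions: the window size, the limits, the class name `BehaviorMonitor`, and the mapping of signals to outcomes are all hypothetical, and it uses fixed rules rather than a learned model. It covers rapid generation and repeated violations only; detecting multiple accounts would need device or network signals that are not shown here.

```python
from collections import defaultdict, deque
from typing import Deque, Dict

WINDOW_SECONDS = 60.0           # assumed sliding-window length
MAX_GENERATIONS_PER_WINDOW = 5  # assumed burst limit
MAX_VIOLATIONS = 3              # assumed strikes before blocking


class BehaviorMonitor:
    """Tracks per-user generation bursts and policy violations."""

    def __init__(self) -> None:
        self._generations: Dict[str, Deque[float]] = defaultdict(deque)
        self._violations: Dict[str, int] = defaultdict(int)

    def record_generation(self, user_id: str, timestamp: float) -> str:
        events = self._generations[user_id]
        events.append(timestamp)
        # Drop events that have aged out of the sliding window.
        while events and events[0] <= timestamp - WINDOW_SECONDS:
            events.popleft()
        return self.status(user_id)

    def record_violation(self, user_id: str) -> str:
        self._violations[user_id] += 1
        return self.status(user_id)

    def status(self, user_id: str) -> str:
        """Return 'blocked', 'reverify', 'flagged', or 'ok'."""
        if self._violations[user_id] >= MAX_VIOLATIONS:
            return "blocked"
        if len(self._generations[user_id]) > MAX_GENERATIONS_PER_WINDOW:
            return "reverify"  # burst looks automated; ask the user to re-verify
        if self._violations[user_id] > 0:
            return "flagged"
        return "ok"
```

Timestamps are passed in explicitly so the logic is easy to test. A running service would more likely supply them from a monotonic clock and persist the counters outside process memory.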

🔐 Security and Infrastructure Enhancements

To strengthen the platform further:

- Rate limiting and bot detection were implemented

- APIs and endpoints were secured

- Content pipelines were encrypted to prevent leaks
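
Rate limiting is often implemented with a token bucket, shown here as a minimal sketch. The article does not say which algorithm the platform used, so the choice of a token bucket, the class name `TokenBucket`, and the example capacity and refill rate are assumptions.

```python
from dataclasses import dataclass


@dataclass
class TokenBucket:
    """Allows bursts up to `capacity`, refilled at a steady rate."""
    capacity: float
    refill_per_second: float
    tokens: float = 0.0
    last_refill: float = 0.0

    def __post_init__(self) -> None:
        # Start full so a new client can make an initial burst.
        self.tokens = self.capacity

    def allow(self, now: float, cost: float = 1.0) -> bool:
        """Refill for the elapsed time, then try to spend `cost` tokens."""
        elapsed = max(0.0, now - self.last_refill)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_second)
        self.last_refill = max(self.last_refill, now)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

For example, `TokenBucket(capacity=3, refill_per_second=1.0)` accepts three immediate requests, rejects a fourth at the same instant, and accepts one more after a second has passed. In practice one bucket would be kept per API key or per client address.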

⚙️ Technology Stack

- AI/ML Models for NLP moderation

- Facial Recognition APIs

- Behavior Analytics Engine

- Secure Cloud Infrastructure

- Bot Detection Systems (reCAPTCHA + biometrics)

🔄 AI Platform Risk vs Safety Transformation

A Clear Shift from Risk to Control

| Area | Before Safeguards | After Safety Implementation |
|---|---|---|
| Age Verification | No reliable validation system | AI-based age verification (<1% underage) |
| Content Moderation | Reactive / guideline-based | NLP-powered pre-generation filtering |
| Explicit Content Attempts | High risk of misuse | Reduced by 98% |
| Avatar Misuse (Deepfake Risk) | No strong detection | Fully eliminated with similarity checks |
| Repeat Offenders | Frequent re-registrations | Reduced by 85% |
| Bot & Abuse Activity | Limited detection | Behavior monitoring + automated blocking |
| Platform Security | Vulnerable endpoints | Encrypted pipelines + rate limiting |
| Platform Trust | At risk due to misuse | Improved to 9.2/10 |

📈 The Results: From Risk to Reliability

Once these systems were in place, the impact was clear and measurable:

- Underage user accounts reduced to less than 1%

- Repeat offender re-registrations reduced by 85%

- Explicit content generation attempts reduced by 98%

- Celebrity and public figure avatar misuse eliminated

- Platform trust score improved to 9.2/10

These improvements didn’t just solve internal issues—they enabled new growth opportunities, especially with enterprise clients that require strict compliance.

🎯 The Bigger Lesson for AI Platforms

This isn’t just one platform’s story—it reflects a broader reality in the AI industry.

Scaling AI without safety doesn’t create growth—it creates risk.

Key lessons:

- Safety systems must be built early, not added later

- Prevention is more effective than moderation after the fact

- Trust is becoming a core competitive advantage

- Responsible AI is no longer optional—it’s expected

🧾 Final Thoughts

AI platforms are entering a phase where control, compliance, and trust define success.

The ability to scale responsibly will separate sustainable platforms from risky ones.

Because in the long run, it’s not just about what AI can create—

it’s about what it should be allowed to create.

If you’re facing challenges around:

- AI misuse

- Content moderation

- Compliance and security

Now is the time to build a strong foundation for safe and scalable growth.

💡 Frequently Asked Questions

What are AI guardrails?

AI guardrails are systems that control how AI behaves by preventing misuse, enforcing policies, and ensuring compliance. They help stop harmful or illegal outputs before they are generated. Without guardrails, AI platforms can quickly become risky and unreliable.

What happens when AI platforms lack safeguards?

Without safeguards, AI platforms can be misused for generating harmful content, deepfakes, or violating regulations. This leads to legal issues, reputational damage, and loss of user trust. As the platform scales, these risks multiply rapidly.

How does AI content moderation work?

AI content moderation uses machine learning models, especially NLP, to analyze user input before content is generated. It detects harmful, explicit, or policy-violating language in real time. This ensures that unsafe content is blocked at the source.

What is deepfake risk?

Deepfake risk refers to the misuse of AI to create realistic but fake representations of real people. This can lead to identity misuse, misinformation, and legal consequences. Preventing it requires facial recognition and similarity detection systems.

How do AI platforms prevent misuse?

AI platforms prevent misuse by combining content moderation, behavior monitoring, and access control systems. Suspicious activities like repeated violations or bot behavior can be automatically flagged or blocked. This creates a safer ecosystem for all users.

Why is age verification important?

Age verification ensures that users meet legal requirements to access certain AI features or content. Without it, platforms risk non-compliance with global regulations. It also helps protect younger users from inappropriate content exposure.

What is behavior-based monitoring?

Behavior-based monitoring tracks user actions to detect unusual or suspicious patterns. It helps identify bots, repeat offenders, or attempts to bypass restrictions. This allows platforms to take proactive action before misuse escalates.

How does AI enhance platform security?

AI enhances security by detecting anomalies, automating threat detection, and strengthening system defenses. It can identify patterns that humans might miss and respond in real time. This makes platforms more resilient against attacks and abuse.

Can AI platforms scale safely without moderation?

No, scaling without moderation leads to uncontrolled risks, including misuse and compliance failures. As user activity increases, manual control becomes impossible. Automated safety systems are essential for sustainable and secure growth.

What is the future of AI platforms?

The future of AI platforms lies in balancing innovation with responsibility and control. Companies are increasingly investing in safety, compliance, and ethical AI practices. Platforms that prioritize trust will have a competitive advantage.